Building a Data Foundation That Lasts

Building a data foundation is like giving your organization a memory. Without it, executives are forced to make decisions on incomplete information, creating risk and inefficiency. Data isn’t just an asset — it’s the institutional memory that enables experience-based, repeatable decision-making.

Data as the memory of the organization

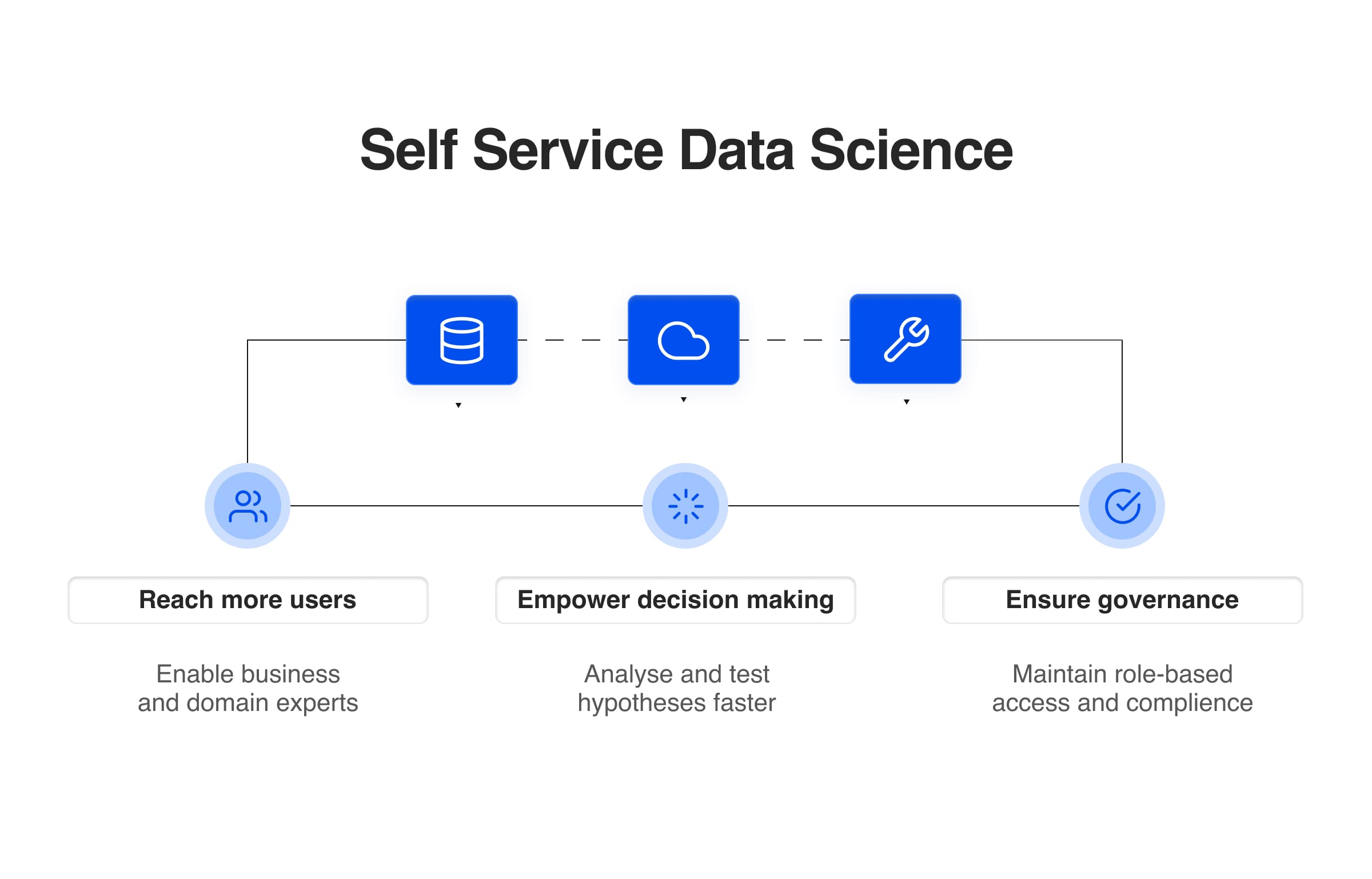

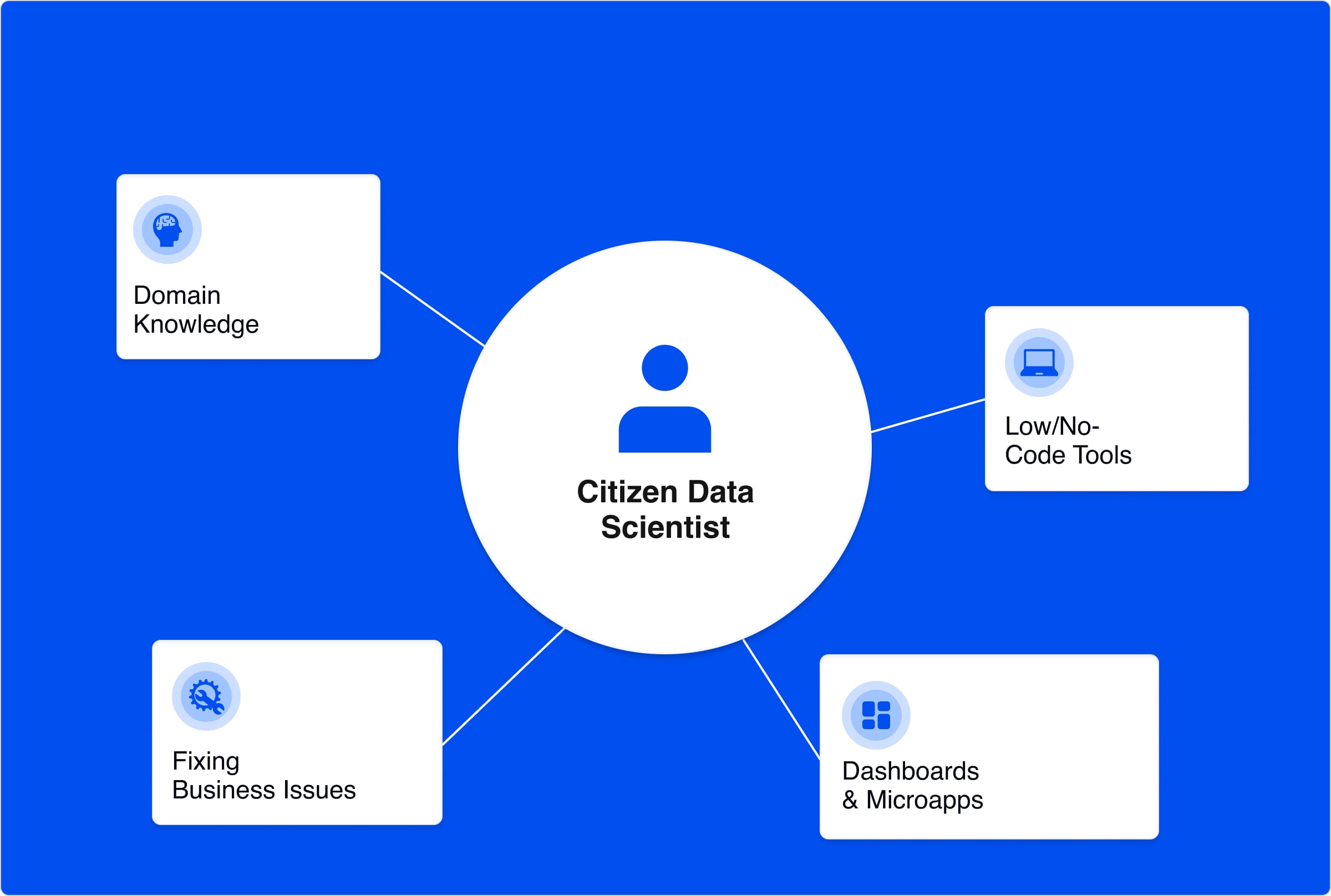

Too often, organizations see “data” as heterogeneous streams or even just anything in a digitized form. But a true foundation is more than a collection of data. It’s about giving business users the autonomy to define formats and schemas about the data they know, while embedding feedback loops that nudge toward standardization. Done right, the data foundation becomes the premise for every future product and insight, the compound interest that pays off over time.

And here’s the good news: it’s never too late to get started.

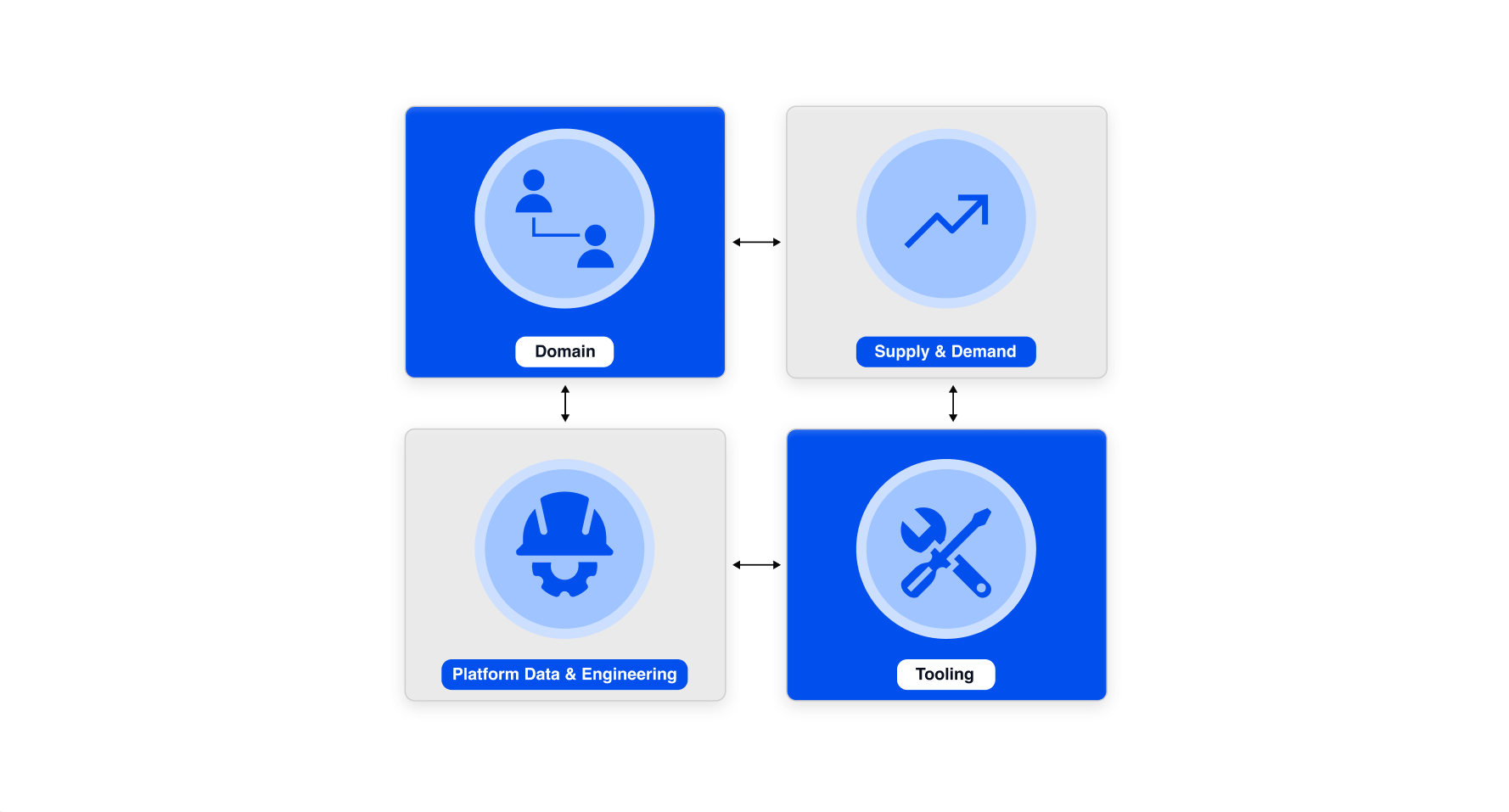

Beyond technology: The organizational dimension

It’s tempting to treat data frameworks as a purely technical challenge. They’re not. The harder, more decisive factor is business alignment. Data is always created in context, and unless that context is respected, governance models quickly drift into either irrelevance or bureaucracy.

Executives should avoid two traps:

- Over-centralization, which turns governance into an academic exercise.

- Over-federation, which leaves standards fragmented and fragile.

McKinsey frames this well: “data governance programs often become a set of policies relegated to a support function, executed by IT and not widely followed.” They argue governance must be re-imagined as a value driver rather than a compliance or control function.

Culturally, leaders must remember: idiomatic data work is resistant to change because it already fits existing processes. Change management must therefore highlight direct payoffs to business users. Strategic goals belong in board decks; adoption depends on making people’s daily work easier. Champions in business units, targeted training, and KPIs tied to new insights accelerate adoption.

Data Foundation Implementation: Where Success or Failure Happens

Implementation is where visions live or die. The differentiator is not architecture diagrams but project-based, iterative rollouts with feedback loops. By delivering immediate value aligned with business workflows, you anchor adoption early and build momentum.

As Bode et al. found in their empirical study of data mesh implementations, organizations that “create quick wins in the early phases” are more successful at anchoring adoption and navigating governance transitions.

Executives should be wary of common pitfalls:

- Consultancy overreach: Outsourced builds without internal ownership often leave “orphaned” products that take years to internalize.

- Illusory accelerators: Quick wins from consultants rarely survive the handover into business processes.

- Detached leadership: Project leaders without business insight disconnect solutions from reality.

- Lack of commitment mechanisms: Without a concrete ask, buy-in stays superficial. Asking business leaders to draft whitepapers surfaces complexities early and secures buy-in, partly through the sunk cost fallacy but more importantly by creating ownership.

Balancing speed and sustainability is a delicate act. The key is to solve real business pains immediately while ensuring solutions rest on domain-aware architecture and robust enablers. Without those foundations, external dependencies will delay you, no matter how good the initial delivery looks.

Executive Lessons for Building a Lasting Data Foundation

If there’s an executive playbook for data and metadata frameworks, it boils down to three lessons:

- Adopt an iterative, pragmatic approach. Deliver continuous value and don’t let ivory-tower architects or compliance stalls block progress.

- Respect the implementation phase. Building software is the easy part. Ensuring people use it is the true test. Treat internal adoption the way a startup treats customer acquisition: as a matter of survival.

- Engage with humility and purpose. Spend time observing, talking, and learning from business functions. Pair that humility with a clear vision of why you’re building the platform.

Emerging Technologies and the Future of Data Foundations

Technologies alone will not solve your problems. Contextualization is what wins.

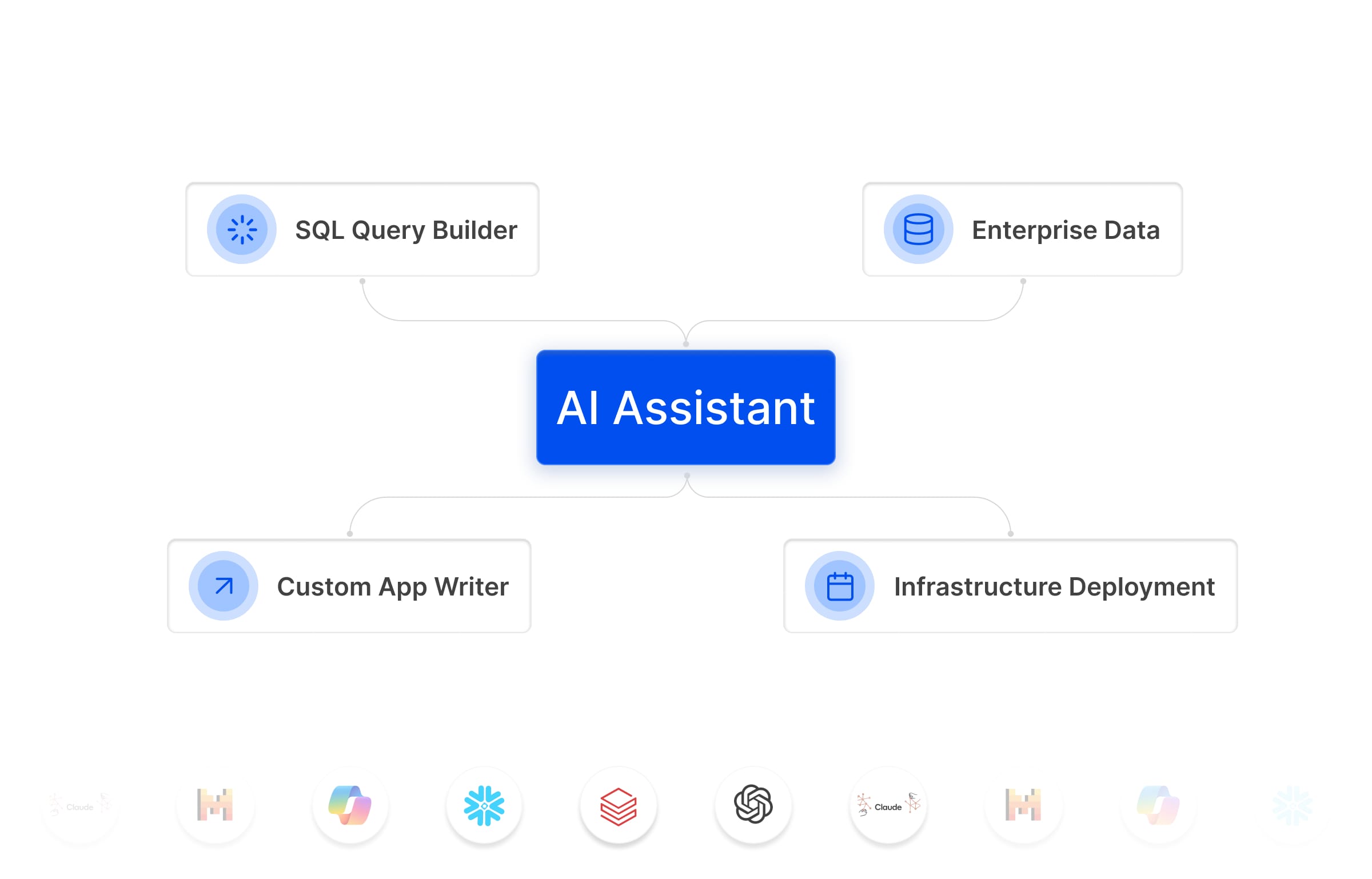

That said, the opportunities that new technologies bring are real. Generative AI can automatically extract metadata, run quality checks, and provide conformance reports that save business users hours of manual work. Data catalogs and portals can make information discoverable and actionable: if a dataset request drops the user directly into an environment where they can interact with it, engagement soars.

These technologies can be transformative, but only when integrated into a contextualized data foundation that reflects the organization’s real processes.
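To make the idea of a conformance report concrete, here is a minimal sketch in Python. It is a plain rule-based check, not generative AI, and it is not taken from any specific product: the names `FieldRule` and `conformance_report`, as well as the sample fields, are hypothetical and chosen only for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Any


@dataclass
class FieldRule:
    """Hypothetical rule: a field name, its expected type, and whether it is required."""
    name: str
    dtype: type
    required: bool = True


def conformance_report(
    rows: list[dict[str, Any]], rules: list[FieldRule]
) -> dict[str, dict[str, int]]:
    """Count, per field, how many rows are missing a value or hold the wrong type."""
    report = {rule.name: {"missing": 0, "wrong_type": 0} for rule in rules}
    for row in rows:
        for rule in rules:
            value = row.get(rule.name)
            if value is None:
                # Absent or empty values only count against required fields.
                if rule.required:
                    report[rule.name]["missing"] += 1
            elif not isinstance(value, rule.dtype):
                report[rule.name]["wrong_type"] += 1
    return report


# Illustrative data only: three order records with typical quality problems.
rules = [
    FieldRule("order_id", int),
    FieldRule("amount", float),
    FieldRule("note", str, required=False),
]
rows = [
    {"order_id": 1, "amount": 9.5},
    {"order_id": "2", "amount": None},
    {"amount": 3.0},
]
report = conformance_report(rows, rules)
```

A report like this only pays off when the rules come from the people who know the data, which is the contextualization point made above.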

Getting Started with a Data Foundation

Executives don’t need to overhaul their organizations overnight. Start with three initiatives that will build momentum and compound value over time:

- Anchor to Immediate Business Pains: Identify where poor data slows down decisions and deliver targeted fixes that demonstrate value quickly.

- Stand Up Central Enablers: Establish shared services (authentication, authorization, metadata registration) that make it easier for business units to align without heavy-handed control.

- Select and Empower Champions: Nominate business unit leaders as champions, give them training, and tie their KPIs to insights generated through better data practices.
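As an illustration of a central enabler that aligns without heavy-handed control, the sketch below shows a metadata registration service that accepts whatever field names a business unit chooses but points out near-duplicates of names already in use. It is a sketch under stated assumptions, not a reference design: the class `MetadataRegistry`, its `register` method, and the 0.8 similarity cutoff are all hypothetical. Only `difflib.get_close_matches` is a real function from Python's standard library.

```python
from __future__ import annotations

import difflib


class MetadataRegistry:
    """Hypothetical in-memory registry of field names used across datasets."""

    def __init__(self) -> None:
        # Maps each field name to the set of datasets that use it.
        self._fields: dict[str, set[str]] = {}

    def register(self, dataset: str, fields: list[str]) -> dict[str, list[str]]:
        """Register a dataset's fields and return standardization suggestions.

        Registration always succeeds (business users keep their autonomy);
        the return value only nudges toward names that already exist.
        """
        known = list(self._fields)
        suggestions: dict[str, list[str]] = {}
        for name in fields:
            if name not in self._fields:
                # Assumed cutoff of 0.8: flag only very similar existing names.
                close = difflib.get_close_matches(name, known, n=3, cutoff=0.8)
                if close:
                    suggestions[name] = close
        for name in fields:
            self._fields.setdefault(name, set()).add(dataset)
        return suggestions


# Illustrative usage: the second team is nudged toward the existing "customer_id".
registry = MetadataRegistry()
registry.register("sales_orders", ["customer_id", "order_date"])
hints = registry.register("support_tickets", ["customerid", "opened_at"])
```

The design choice worth noting is that the registry suggests rather than rejects, so the nudge toward a shared name arrives while the work stays unblocked.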

Reach Out to Us Today

If you’re working through a data transformation now or have thought about building a data foundation, I’d love to hear your lessons learned (anonymously if needed). Let’s share what’s working and what’s not.

FAQs

What does it mean to build a long-lasting data foundation?

Building a long-lasting data foundation means creating a stable, governed and scalable environment where data can be securely accessed, reused and trusted across the organisation. It is not a single project, but a continuous capability that supports analytics, AI and operational decision-making over time.

Why do many data foundation initiatives fail?

Many data initiatives fail because they focus only on technology and neglect people, processes and governance. Without clear ownership, consistent access patterns and the right platform, organisations accumulate technical debt, duplicate efforts and struggle to deliver reliable, reusable data.

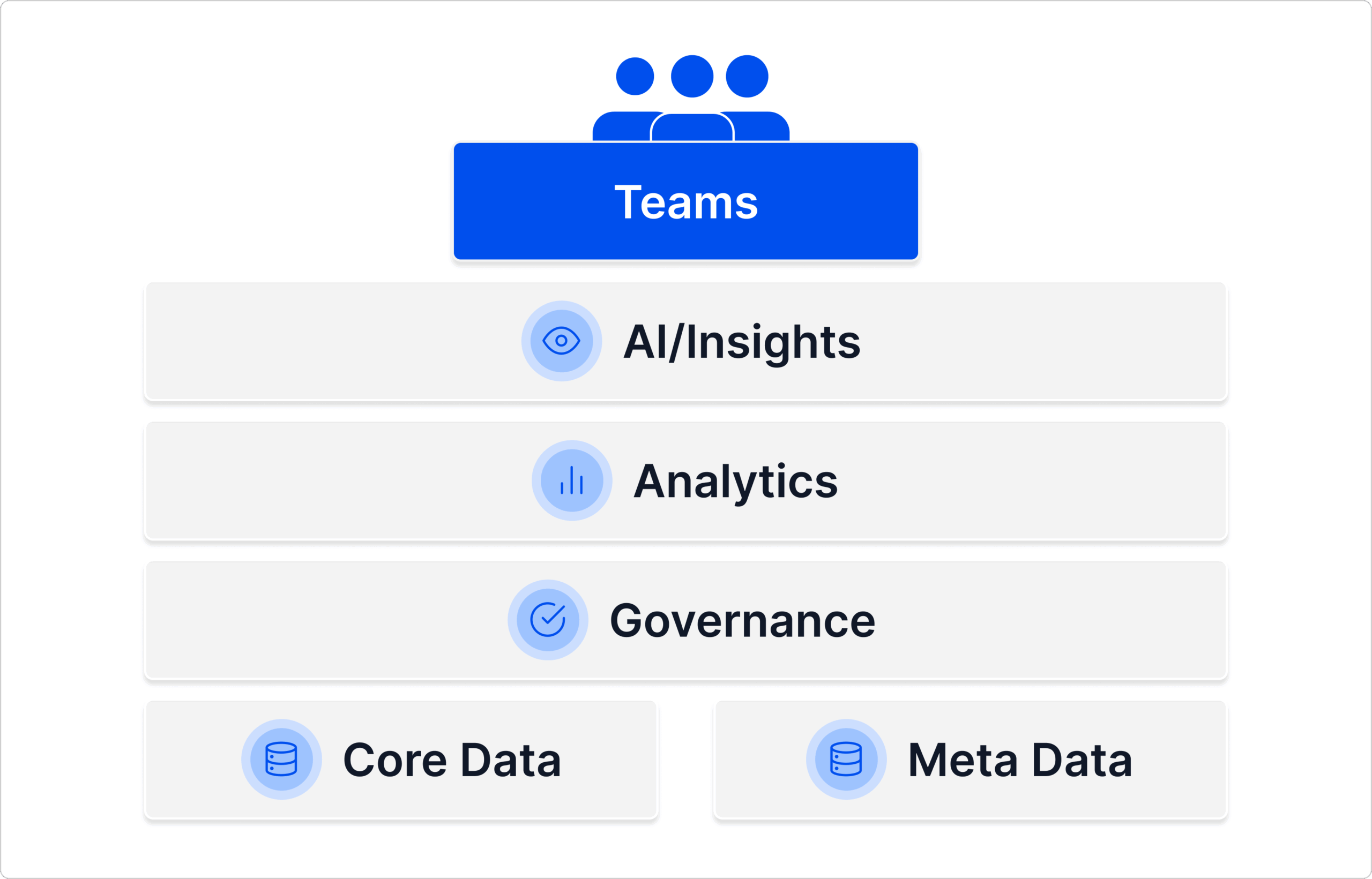

What are the key components of a modern data foundation?

A modern data foundation includes governed data access, identity-based authentication, consistent tooling, standardised environments, clear data ownership and reusable components for analytics and AI. Together, these elements ensure that teams can work quickly while maintaining security and reliability.

How does governance support a sustainable data foundation?

Governance ensures that the right people have access to the right data with consistent rules and monitoring. It provides transparency, guards against misuse and supports compliance. Strong governance helps organisations scale analytics safely without creating shadow systems or conflicting sources of truth.

Why is interoperability important in a data foundation?

Interoperability ensures that data, tools and systems can work together without custom integrations or manual data movement. When organisations rely on interoperable components, they reduce complexity, increase reuse and make it easier to evolve their data architecture as needs and technologies change.

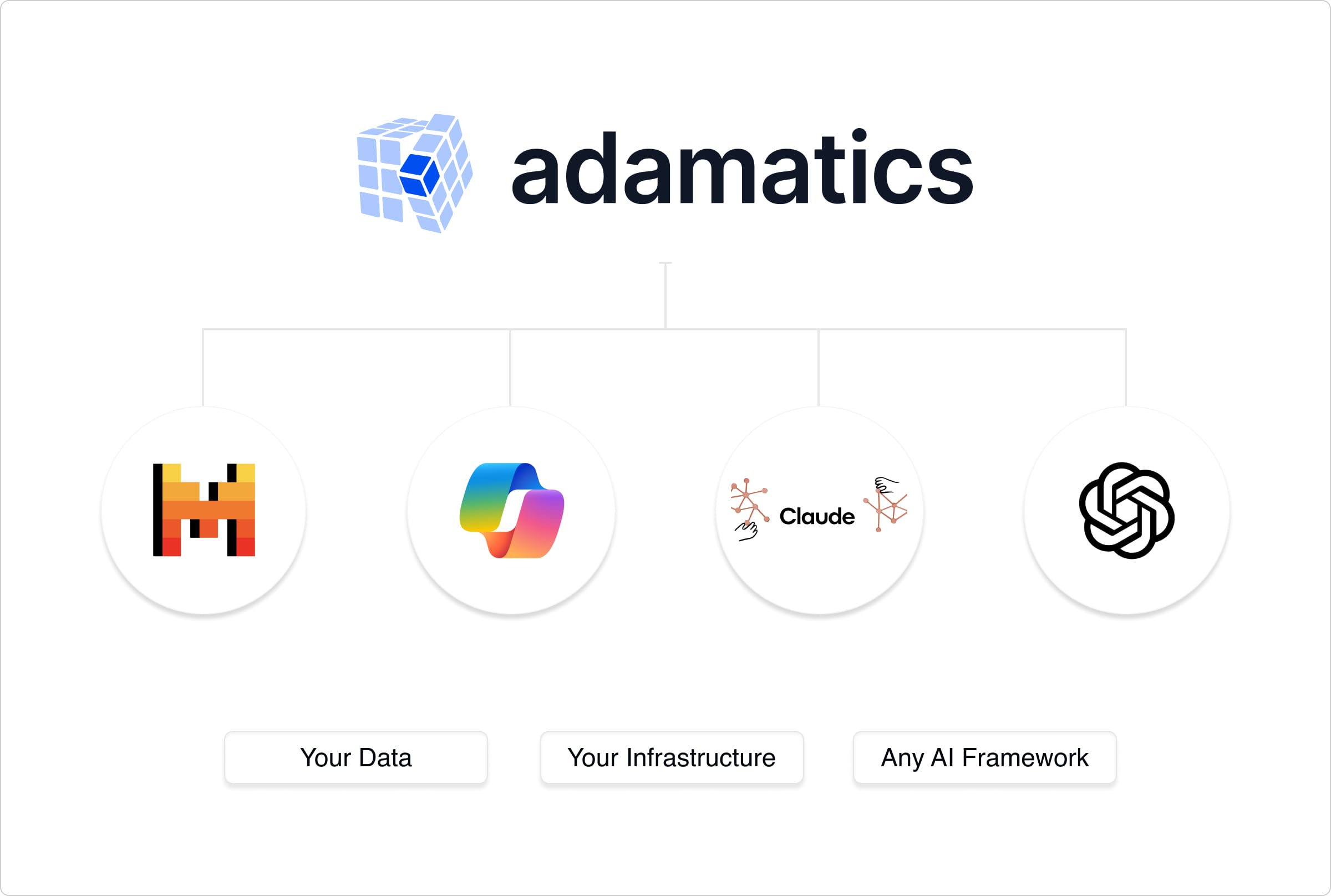

How does the Adamatics platform enable a strong data foundation?

The Adamatics platform provides governed access through the Integration Layer, consistent containerised environments, identity pass-through, and shared templates for analytics and AI. It supports a sustainable data foundation by reducing friction, centralising best practices and enabling teams to build reliably on top of existing systems.

How can organisations get started with building a durable data foundation?

Organisations can start small by identifying key datasets, standardising access through governed APIs and providing shared environments for analytics. By enabling early wins and expanding gradually, they can build a foundation that improves over time rather than attempting a large, high-risk transformation all at once.